After 6 months of trading 14 hours a day, the session breakdown showed that 64% of screen time (9 of 14 hours) was, on net, losing money. One strategy, four sessions, wildly different outcomes. Once Alex cut the unprofitable sessions and traded only London plus the first hour of the NY Overlap, monthly P&L went from $800 to $2,240, a 180% increase achieved by trading less, not more.

This case study walks through the exact analysis: what the data showed, why only one session worked for that strategy, the specific change made, and how to replicate the analysis for any market (forex, futures, crypto, equities). The framework is mechanical, not intuitive — and that's why most traders miss it until they look at their own numbers.

"Alex" is a composite profile representing a trading pattern documented across multiple traders using similar momentum-breakout strategies on major FX pairs. Specific P&L figures, trade counts, and session metrics are drawn from real anonymized trading logs. The analysis framework at the end is the replicable core — specific outcomes will vary by strategy, market, and session.

The Background: Trading All Day, Every Day

Alex traded EUR/USD and GBP/USD from 2 AM to 4 PM EST — covering the Asian tail end, full London session, and most of the New York session. That's 14 hours of screen time. He believed more hours meant more opportunities, and more opportunities meant more profit.

After 6 months, results were underwhelming: $800/month average on a $15,000 account. He was working harder than most full-time traders — 14 hours a day — for what amounted to less than $5 per hour of screen time. Something was structurally wrong, and sitting in more hours wasn't fixing it.

The diagnosis came from one question: where, within those 14 hours, was the money actually being made?

The Session Breakdown

Methodology: Tagging Every Trade

Alex tagged each of his 450 trades over the 6-month window by the session when the position was entered. Four session buckets were used, aligned with New York time:

- Asia / Early London — 2:00-3:00 AM EST (late Asian session bleed into London pre-open)

- London — 3:00-8:00 AM EST (core European trading window)

- NY Overlap — 8:00 AM-12:00 PM EST (London-NY dual liquidity)

- NY Afternoon — 12:00-4:00 PM EST (post-London, NY-only)
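The bucketing above can be sketched as a small lookup. This is a minimal illustration, not Alex's actual tooling: the names `SESSION_BUCKETS` and `tag_session` are hypothetical, and the sketch assumes entry timestamps have already been converted to New York local time.

```python
from datetime import datetime, time

# (label, start, end) in New York local time; the end time is exclusive.
SESSION_BUCKETS = [
    ("Asia / Early London", time(2, 0), time(3, 0)),
    ("London", time(3, 0), time(8, 0)),
    ("NY Overlap", time(8, 0), time(12, 0)),
    ("NY Afternoon", time(12, 0), time(16, 0)),
]


def tag_session(entry: datetime) -> str:
    """Return the session bucket for an entry timestamp.

    Assumes `entry` is already expressed in New York local time.
    """
    clock = entry.time()
    for label, start, end in SESSION_BUCKETS:
        if start <= clock < end:
            return label
    return "Outside tracked hours"


print(tag_session(datetime(2024, 3, 5, 3, 0)))    # London
print(tag_session(datetime(2024, 3, 5, 12, 30)))  # NY Afternoon
```

Using half-open intervals means a trade entered at exactly 8:00 AM lands in NY Overlap rather than in both buckets.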

The Numbers That Broke the Assumption

| Session | Hours Traded | Trade Count | Win Rate | Expectancy / Trade | Monthly P&L |
|---|---|---|---|---|---|
| London (3-8 AM EST) | 5 hrs | 180 | 57% | +$62 | +$2,790 |
| NY Overlap (8 AM-12 PM) | 4 hrs | 150 | 50% | +$8 | +$300 |
| Asia / Early London (2-3 AM) | 1 hr | 45 | 36% | -$41 | -$460 |
| NY Afternoon (12-4 PM) | 4 hrs | 75 | 40% | -$48 | -$900 |

The data was unambiguous. London produced nearly all of the profit: $2,790/month with a 57% win rate and $62 per-trade expectancy. NY Overlap was barely above breakeven. Asia / Early London and NY Afternoon were both clearly negative.

Alex was spending 9 hours per day (Asia + NY Overlap + NY Afternoon) to generate -$1,060/month, and 5 hours per day (London) to generate +$2,790. The 14-hour days were producing worse results than the 5-hour London-only window would have alone.

The uncomfortable realization: 64% of Alex's screen time (the 9 non-London hours out of 14) was, on net, losing money. He wasn't just wasting time outside London; he was paying the market to sit in front of his computer.
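The 64% and -$1,060 figures follow directly from the session table. A short check, using only the hours and monthly P&L values from that table (the variable names are illustrative):

```python
# Figures copied from the session table: (hours per day, monthly P&L in USD).
sessions = {
    "London": (5, 2790),
    "NY Overlap": (4, 300),
    "Asia / Early London": (1, -460),
    "NY Afternoon": (4, -900),
}

total_hours = sum(hours for hours, _ in sessions.values())
other_hours = sum(h for name, (h, _) in sessions.items() if name != "London")
other_pnl = sum(p for name, (_, p) in sessions.items() if name != "London")
share = other_hours / total_hours

print(f"Non-London hours: {other_hours} of {total_hours} ({share:.0%})")
# Non-London hours: 9 of 14 (64%)
print(f"Non-London monthly P&L: {other_pnl}")
# Non-London monthly P&L: -1060
```

Note that the 9 hours include NY Overlap, which was slightly positive on its own; the loss is a net figure across the three non-London buckets.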

Why London Worked and Others Did Not

Alex's strategy was a momentum breakout system: enter on clean breaks of the Asian-session range during London, ride the directional flow as European institutional money hit the market.
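For readers unfamiliar with range breakouts, the core idea can be sketched in a few lines. This is a deliberately simplified illustration, not Alex's actual entry rules: the source does not define what counts as a "clean" break, so the sketch assumes a plain price-beyond-range test, and the function names and sample prices are hypothetical.

```python
def asian_range(bars):
    """Return (range_high, range_low) from Asian-session bars.

    `bars` is an iterable of (high, low) tuples.
    """
    highs, lows = zip(*bars)
    return max(highs), min(lows)


def breakout_signal(price, range_high, range_low):
    """Return 'long', 'short', or None for a price relative to the range.

    Assumption: any trade beyond the range counts as a break. A real
    system would likely add confirmation filters.
    """
    if price > range_high:
        return "long"
    if price < range_low:
        return "short"
    return None


# Made-up EUR/USD bars for illustration only.
high, low = asian_range([(1.0850, 1.0820), (1.0862, 1.0831), (1.0858, 1.0815)])
print(breakout_signal(1.0870, high, low))  # long
print(breakout_signal(1.0840, high, low))  # None
```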

The Three Conditions

The strategy required three market conditions to function:

- A defined range to break out of — the Asian session range established overnight

- Directional momentum — institutional flow entering at the London open

- Sufficient liquidity — tight spreads, clean fills, minimal slippage

During London (3-8 AM EST), all three conditions were consistently present: spreads were tighter, order books were deeper, and FX turnover was at its daily peak (London is the largest FX trading center globally, per BIS Triennial Survey data). Each condition matters individually; together they produce the environment a breakout strategy is built for.

Why Asia Failed

During the Asia / Early London window (2-3 AM EST), the range was still forming, so there was nothing yet to break out of. Alex was attempting to trade the range itself, which his breakout strategy wasn't designed for. Range-bound markets call for fade strategies, not breakout strategies. The tool was wrong for the environment.

Why NY Afternoon Failed

By NY afternoon, institutional momentum had faded, liquidity had thinned as European desks closed, and clean directional moves had given way to choppy consolidation. The breakout strategy repeatedly took false signals: spreads widened, fills deteriorated, and the directional flow that powered the strategy during London was simply absent.

Alex's strategy wasn't bad in Asia and NY afternoon — it was irrelevant. Like using a fishing rod in a swimming pool: the tool works, but the environment doesn't support it.

The Change: One Rule, 57% Fewer Hours

Alex made a single change: trade only the London session (3-8 AM EST) plus the first hour of the NY Overlap (8-9 AM EST). Total screen time dropped from 14 hours to 6 hours per day — a 57% reduction.

What Freed Hours Got Used For

- Pre-session analysis — identifying Asian range levels, drawing key zones, flagging high-impact news

- Post-session journal review — grading each trade, noting setup quality, flagging behavioral anomalies

- Physical recovery — exercise, proper meals, sleep to offset the toll of 14-hour screen days

- Strategy research — backtesting refinements, journal analysis, reading order-flow studies

Cutting hours freed bandwidth for the work that actually improves a trader's edge — something 14-hour days had eliminated entirely.

Three-Month Results

| Metric | Before (All Sessions) | After (London + First NY Overlap Hour) | Change |
|---|---|---|---|
| Monthly P&L | $800 | $2,240 | +180% |
| Screen time/day | 14 hours | 6 hours | -57% |
| Trades/month | 75 | 42 | -44% |
| Win rate | 48% | 58% | +10pp |
| Expectancy/trade | $10.67 | $53.33 | +400% |
| $/hour of screen time | $3.80 | $24.90 | +555% |

Why Expectancy Jumped 400% (Not Just 50%)

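The expectancy gain wasn't incremental, because expectancy is an average over every trade taken. Before the change, each profitable London trade was averaged together with negative-expectancy trades from Asia / Early London and NY Afternoon, so $800 spread across 75 trades a month came to $10.67 per trade. After the change, $2,240 across 42 trades came to $53.33. Cutting the losing sessions removed their losses and also removed those trades from the denominator, so nearly every remaining trade was taken in conditions the strategy was designed for.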
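The expectancy figures in the results table can be reproduced by dividing monthly P&L by trades per month. A quick check using the table's own numbers (the helper names are illustrative):

```python
def expectancy(monthly_pnl: float, trades_per_month: float) -> float:
    """Average P&L per trade."""
    return monthly_pnl / trades_per_month


def pct_change(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


before = expectancy(800, 75)
after = expectancy(2240, 42)
change = pct_change(before, after)

print(f"{before:.2f} -> {after:.2f} ({change:+.0f}%)")
# 10.67 -> 53.33 (+400%)
```

Monthly P&L rose 2.8x while trade count fell to 56% of its former level, and 2.8 / 0.56 = 5, which is why per-trade expectancy grew fivefold.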

What the Win Rate Jump Actually Represented

The 10-percentage-point win rate jump (48% → 58%) wasn't because Alex became a better trader in three months. It was because the trades that had been dragging the win rate down were no longer being taken. Filtering out the wrong environment is often indistinguishable from a genuine skill improvement — in outcome, though not in cause.

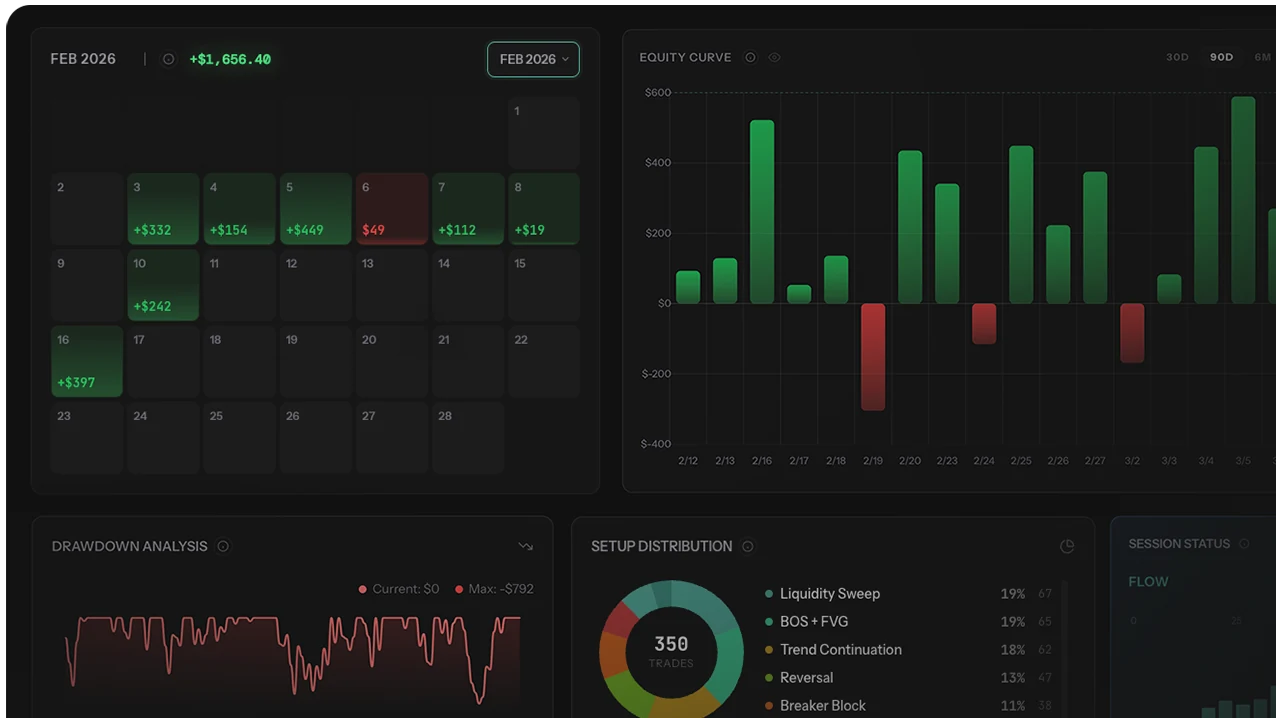

Running this analysis manually is possible but painful. Tagging 450 trades by session, calculating expectancy per bucket, and tracking trade counts across months typically takes 3-4 hours of spreadsheet work. Modern trading journals automate the session-breakdown calculation once trade timestamps are imported — the journal comparison guide covers which ones handle session analytics natively.

3 Mistakes Traders Make Applying Session Filters

Mistake 1: Cutting on One Month of Data

A single bad month in a session isn't a structural problem — it could be a trending macro regime, a concentrated losing streak, or statistical noise. Traders who cut a session after one month often regret it within 60-90 days when the session returns to its true expectancy. Require at least 60 days and 30+ trades per session before acting.
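The threshold can be encoded as a simple guard that runs before any session is cut. This is a sketch under one stated assumption: "60 days" is read here as the calendar span between the first and last trade in the session, which the source does not specify. The names `MIN_DAYS`, `MIN_TRADES`, and `enough_data` are hypothetical.

```python
from datetime import date, timedelta

MIN_DAYS = 60    # minimum observation window, in calendar days (assumed reading)
MIN_TRADES = 30  # minimum trades per session


def enough_data(trade_dates: list[date]) -> bool:
    """True when a session has MIN_TRADES+ trades spanning MIN_DAYS+ days."""
    if len(trade_dates) < MIN_TRADES:
        return False
    span_days = (max(trade_dates) - min(trade_dates)).days
    return span_days >= MIN_DAYS


start = date(2024, 1, 1)
one_month = [start + timedelta(days=i) for i in range(30)]         # 30 trades, 29-day span
three_months = [start + timedelta(days=3 * i) for i in range(30)]  # 30 trades, 87-day span

print(enough_data(one_month))     # False
print(enough_data(three_months))  # True
```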

Mistake 2: Confusing "Can't Trade" With "Won't Trade"

Some traders declare a session unprofitable because they can't wake up at 3 AM to trade London, rather than because their strategy genuinely underperforms there. That's not a data-driven decision — it's a preference wearing data clothing. Be honest about which constraint is actually driving the decision: environment incompatibility with strategy (structural) or willingness to be at the desk (personal).

Mistake 3: Applying the Same Filter to Different Strategies

Session performance is strategy-specific. A momentum breakout strategy fails in Asia for structural reasons (no breakout range). A range-fade strategy would thrive there. Running one strategy's session analysis and applying its conclusions to a different strategy is a category error. Each strategy needs its own session breakdown.

How to Find Your Best Session (4 Steps)

Replicate the analysis in four steps:

- Tag every trade by session for 60 days. Use time-based tags: Early Asian, Late Asian, London Open, London Main, NY Overlap, NY Afternoon, NY Close. Be specific — different windows within a session can perform very differently. If your journal auto-imports from your broker, timestamps are already there; you just need to apply a session-bucket tag.

- Calculate per-session metrics. Win rate, average winner, average loser, expectancy, trade count for each session tag. Minimum viable analysis: 30 trades per session.

- Identify your best and worst sessions. Look for sessions with significantly different expectancies. A $50+ gap in per-trade expectancy across 30+ trades is meaningful. A $5 gap with low trade counts is noise.

- Test a session filter. For one month, trade only the best 1-2 sessions. Compare to the previous equivalent period. If profits improve and the gap persists month over month, keep the filter. If it reverses, sample size was too small.
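Steps 2 and 3 can be sketched with the standard library alone. This is one possible implementation under stated assumptions, not a reference to any particular journal's feature: the input format of `(session_tag, pnl)` pairs, the function names, and the sample trades are all hypothetical, and breakeven trades are counted in the total but as neither winners nor losers.

```python
from collections import defaultdict
from statistics import mean

MIN_TRADES = 30  # minimum viable sample per session, from step 2


def session_metrics(trades):
    """Compute per-session stats from (session_tag, pnl) pairs."""
    by_session = defaultdict(list)
    for session, pnl in trades:
        by_session[session].append(pnl)

    report = {}
    for session, pnls in by_session.items():
        winners = [p for p in pnls if p > 0]
        losers = [p for p in pnls if p < 0]
        report[session] = {
            "trades": len(pnls),
            "win_rate": len(winners) / len(pnls),
            "avg_winner": mean(winners) if winners else 0.0,
            "avg_loser": mean(losers) if losers else 0.0,
            "expectancy": mean(pnls),
            "enough_data": len(pnls) >= MIN_TRADES,
        }
    return report


def expectancy_gap(report):
    """Best minus worst expectancy among sessions with enough trades."""
    eligible = [m["expectancy"] for m in report.values() if m["enough_data"]]
    return max(eligible) - min(eligible) if len(eligible) >= 2 else None


# Synthetic trades for illustration only.
sample = (
    [("London", 100)] * 18 + [("London", -50)] * 12
    + [("NY Afternoon", 80)] * 12 + [("NY Afternoon", -70)] * 18
)
report = session_metrics(sample)
print(report["London"]["expectancy"])        # 40
print(report["NY Afternoon"]["expectancy"])  # -10
print(expectancy_gap(report))                # 50
```

Sessions below the 30-trade minimum are excluded from the gap calculation, so a thin bucket cannot drive the decision on its own.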

The analysis works for any market: forex sessions, US stock market hours (pre-market, open, midday, close), crypto sessions (Asia, Europe, US), and futures sessions (RTH vs ETH). The principle is universal: find where the edge lives and stop trading where it doesn't.

Who Should Skip Session Filtering

Session filtering isn't universally applicable. Specific trader profiles get limited value — or negative value — from this exercise:

- Scalpers taking 50+ trades/day. High-frequency strategies average out session differences. Session expectancy becomes less meaningful when the trade count inside any single day already exceeds most traders' monthly volume.

- Swing traders holding multi-day positions. If trades are held for days, the entry session rarely drives the outcome. Macro context and trade management matter far more than which hour entry happened in.

- Crypto traders on 24/7 markets. Crypto has no fixed equivalent of the London open or NY close, so the traditional FX session buckets don't transfer directly. Session analysis in crypto requires custom bucketing by exchange activity, funding windows, or derivatives flow.

- Traders with fewer than 150 total trades. The math doesn't work. Four session buckets with 30 trades each require at least 120 trades, and because trades rarely split evenly across sessions, 150 is a more realistic floor. Below that, any verdict is likely noise.

- Traders of a single strategy in a single session already. If trading only happens during one specific window (e.g., NY open only), there's nothing to filter — the filter is already implicit.

For these profiles, the useful analysis is usually setup performance (not session), market condition (trending vs ranging), or behavioral patterns (revenge trading, overtrading) rather than time-of-day filtering.

Final Verdict: Let Data Choose Your Hours

The instinct that more screen time produces more profit is wrong for most strategies. Each strategy has environmental conditions it was built for. Outside those conditions, the strategy isn't bad — it's irrelevant. And irrelevant trades aren't neutral; they cost spread, commission, and behavioral capital.

Alex's 180% monthly P&L improvement came from doing less: fewer hours, fewer trades, same strategy, better environment. The 555% jump in hourly screen-time earnings is the headline number that matters most — it's what makes this sustainable rather than another form of burnout.

Three principles from this case study:

- Screen time without edge is a cost, not an asset. Most traders accumulate losing screen time without measuring it.

- Sample size matters more than conviction. Require 30+ trades per session bucket, 60 days of data minimum, and at least a $50 expectancy gap before cutting.

- The filter must match the strategy. Session analysis is strategy-specific — one strategy's best session is another strategy's worst.

For more data-driven performance improvements using the same analytical lens, see the calendar heatmap case study, the AI coach analysis case study, and the overtrading cost analysis for session-based spread optimization that complements this approach.