P/L tells you what happened. Trade quality tells you why. By grading every trade A, B, or C based on process discipline rather than outcome, you separate luck from skill — and find your real edge. The fundamental insight: an A-grade trade that loses money is better for long-term performance than a C-grade trade that makes money. The A-grade loss was correct execution with an unfavorable outcome (one of the necessary losses every positive-expectancy strategy produces); the C-grade win was incorrect execution that happened to land profitable. Only the first is repeatable. Across observational data, traders who shift from 30% to 60% A-grade execution typically see monthly P/L improve by 50-150% — without strategy changes, just by reducing C-grade rule breaks.

This guide covers the A-B-C grading framework with explicit per-grade criteria, the rules for consistent honest grading that prevent self-serving distortion, the analysis of quality data showing how A-grade vs C-grade per-trade expectancy diverges, the four common patterns observed in retail trader quality data, the four-step process for shifting your distribution toward A-grades, and the psychological resistance most traders experience when their data tells them their best month was actually a discipline failure.

The trade quality grading framework draws on process capability analysis in manufacturing quality control, adapted to discretionary trading. The process-vs-outcome distinction references decision-theory literature on outcome bias in judgment under uncertainty. Specific dollar figures and grade-distribution percentages illustrate typical patterns observed across active retail traders in the TSB journal user base; individual trader patterns vary substantially based on strategy, sample size, and grading rigor.

The principle in one sentence: An A-grade trade that loses money is better for your long-term account than a C-grade trade that makes money. The A-grade loss was correct execution with an unfavorable outcome. The C-grade win was incorrect execution with a lucky outcome. Only one of those is repeatable.

Why P/L Alone Is Misleading

A trader makes $500 on a trade. Good result? Maybe. If the trade followed every rule in their plan with proper sizing and stop placement, then yes — the process was sound and the outcome confirmed it. If the trade was an impulse entry with no stop loss that happened to go the right direction, then the $500 is borrowed money. The market will take it back.

The Outcome-Bias Problem

Decision-theory literature documents outcome bias: humans evaluate decisions based on their results rather than the quality of the decision-making process. This is psychologically natural but produces systematically wrong conclusions in domains where luck affects outcomes, such as trading. A reckless trader can outperform a disciplined one over a 30-trade sample because variance dominates skill at small samples. Over 200+ trades, variance averages out and process quality becomes the most reliable predictor of results.

The Solution: Separate Process From Outcome

Trade quality scoring fixes the outcome-bias problem by creating a separate measurement layer. You track both what happened (P/L) and how it happened (quality grade). The relationship between these two metrics reveals your actual edge — not the illusion of one.

The A-B-C Grading Framework

Keep the system simple. Three grades, clear criteria, assigned immediately after each trade — before seeing post-exit price movement.

A-Grade: Perfect Process

Every element of your trading plan was followed. Criteria:

- All entry conditions met before clicking buy/sell

- Position size calculated correctly (within 10% of ideal)

- Stop loss placed at the planned level

- Take profit or exit rules followed as written

- No emotional interference — the trade was planned before market hours or met all criteria during live analysis

An A-grade trade can lose money. That's fine. The loss was the cost of a correct decision — one of the necessary losing trades that every positive-expectancy system produces. Across observational data, A-grade trades win at roughly the strategy's underlying win rate; the wins compound and the losses are absorbed within normal variance. The strategy works as designed when graded execution matches A-grade criteria.

B-Grade: Minor Deviations

The trade was fundamentally sound but had one or two minor imperfections:

- Entry was slightly early or late (within 30% of ideal entry zone)

- Position size was off by 10-25% (slightly too large or too small)

- Stop was adjusted once during the trade (moved to breakeven too early or too late)

- Exit was slightly premature or held slightly too long versus the plan

B-grade trades are acceptable. Minor deviations are human and don't significantly affect long-term expectancy. The goal isn't to eliminate B-grades but to minimize them. A trader with 20-30% B-grade frequency is operating normally; one with 50%+ B-grade frequency has consistency issues that compound into edge erosion.

C-Grade: Rule Breaks

One or more major rules were broken. Criteria for C-grade:

- Entered without meeting primary entry criteria (impulse trade)

- No stop loss placed, or stop was removed during the trade

- Position size exceeded maximum risk (more than 25% over plan)

- Revenge trade — entered specifically to recover a previous loss

- Traded outside planned hours, pairs, or conditions

- No written plan existed before entry

C-Grade = Warning Signal: A C-grade trade isn't just a bad trade — it's a process failure indicating emotional interference or discipline breakdown. Two or more C-grade trades in a day should trigger your stop rule. C-grades cluster — one leads to another because the same emotional state that caused the first persists into subsequent trades.
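The per-grade criteria above can be written down as a small decision function. This is a minimal sketch, not a definitive implementation: the `TradeProcess` fields and the `grade_trade` and `stop_rule_triggered` names are hypothetical, and two choices are assumptions the criteria do not settle (undersizing by more than 25% is treated as B, and any number of minor deviations is treated as B).

```python
from dataclasses import dataclass


@dataclass
class TradeProcess:
    """Hypothetical record of how a trade was executed (field names are illustrative)."""
    entry_criteria_met: bool   # all primary entry conditions were met
    written_plan: bool         # a written plan existed before entry
    stop_placed: bool          # stop was placed and never removed
    within_plan_scope: bool    # planned hours, pairs, and conditions
    revenge_trade: bool        # entered to recover a previous loss
    size_deviation: float      # (actual - planned) / planned; 0.15 means 15% over
    minor_deviations: int      # entry timing, one stop adjustment, exit timing


def grade_trade(t: TradeProcess) -> str:
    """Return 'A', 'B', or 'C' from process facts only; P/L is deliberately not an input."""
    major_break = (
        not t.entry_criteria_met
        or not t.written_plan
        or not t.stop_placed
        or not t.within_plan_scope
        or t.revenge_trade
        or t.size_deviation > 0.25  # oversized by more than 25%
    )
    if major_break:
        return "C"
    # Assumption: a size error above 10% in either direction counts as a minor slip.
    if abs(t.size_deviation) > 0.10 or t.minor_deviations > 0:
        return "B"
    return "A"


def stop_rule_triggered(grades_today) -> bool:
    """True once two or more C-grades have been logged in the same day."""
    return list(grades_today).count("C") >= 2
```

Keeping P/L out of the function signature is the point of the design: the grade cannot drift with the outcome if the outcome is never passed in.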

Rules for Consistent Honest Grading

The grading system only works if applied honestly and consistently. Without these four disciplines, the data becomes garbage and the entire framework collapses.

Rule 1: Grade Immediately

Assign the grade within 5 minutes of closing the trade, before seeing how price moves after your exit. If you grade hours later, you'll unconsciously adjust based on what happened post-exit — winners get inflated grades, losers get deflated ones. This is outcome bias contaminating the process measurement that's specifically designed to remove it.

Rule 2: Grade Based on What You Knew at Entry

If you entered at support with all criteria met and the trade lost money because support broke, that's still an A-grade trade. The outcome was unfavorable, but the decision was correct based on available information. If you wouldn't change anything about the entry decision knowing only what you knew at entry time, the grade is A regardless of the outcome.

Rule 3: Be Honest About C-Grades

The natural temptation is to upgrade C-grades to B because admitting a rule break feels uncomfortable. Fight this. The data only works if labels are accurate. A dishonest grading system produces dishonest conclusions, and the trader continues thinking they have better discipline than they do. Honest C-grades are the most valuable data points in your journal because they identify the specific failure modes that compound into long-term edge erosion.

Rule 4: Write One Sentence Explaining the Grade

Next to every grade, note why: "A — all criteria met, entered at support, 1% risk, stop below structure." Or: "C — impulse entry, no setup, frustrated after previous loss." The notes make weekly review 10x more useful by surfacing the specific patterns that drive grade distribution. Without notes, grades are abstract; with notes, they become diagnostic.

How to Analyze Your Quality Data

After 50+ graded trades, create this comparison table:

Grade-Level Performance Decomposition

| Grade | Count | Win Rate | Avg Winner | Avg Loser | Expectancy/Trade | Total P/L |
|---|---|---|---|---|---|---|
| A-Grade | 28 | 58% | $145 | $82 | +$51 | +$1,428 |
| B-Grade | 15 | 47% | $120 | $95 | +$6 | +$90 |
| C-Grade | 7 | 29% | $85 | $130 | −$67 | −$469 |

What the Data Reveals

This example reveals a clear pattern. A-grade trades have a $51 per-trade expectancy. C-grade trades have a −$67 per-trade expectancy. The trader's entire edge comes from A-grade execution. Every C-grade trade they eliminate is worth $67 in expected value to their account.
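A decomposition like the table above can be produced from a plain list of graded trades. The sketch below assumes each trade is a `(grade, pnl)` tuple and that breakeven trades count as losses of zero; the `grade_breakdown` name is illustrative, not part of any journal tool.

```python
from collections import defaultdict


def grade_breakdown(trades):
    """trades: iterable of (grade, pnl) tuples. Returns {grade: stats dict}."""
    by_grade = defaultdict(list)
    for grade, pnl in trades:
        by_grade[grade].append(pnl)

    report = {}
    for grade, pnls in sorted(by_grade.items()):
        wins = [p for p in pnls if p > 0]
        losses = [-p for p in pnls if p <= 0]  # stored as positive amounts
        win_rate = len(wins) / len(pnls)
        avg_win = sum(wins) / len(wins) if wins else 0.0
        avg_loss = sum(losses) / len(losses) if losses else 0.0
        report[grade] = {
            "count": len(pnls),
            "win_rate": win_rate,
            "avg_winner": avg_win,
            "avg_loser": avg_loss,
            # Expectancy per trade: what the average trade of this grade returns.
            "expectancy": win_rate * avg_win - (1 - win_rate) * avg_loss,
            "total_pnl": sum(pnls),
        }
    return report
```

Computed this way, expectancy per trade equals total P/L divided by trade count, which gives a quick consistency check on any hand-built table.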

The Improvement Math

If this trader could convert all 7 C-grade trades into A-grade trades, monthly P/L would improve by approximately $826, from +$1,049 to +$1,875: each conversion replaces a −$67 expectation with a +$51 one, a swing of $118 per trade across 7 trades. That's roughly a 79% improvement without changing the strategy at all. The leverage isn't in finding new setups or better entries; it's in eliminating the rule breaks that destroy edge already present in the data.
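The arithmetic behind these figures can be reproduced directly from the example table. The snippet below is only a worked check of the numbers in this example; the variable names are illustrative.

```python
# Per-trade expectancy and trade counts copied from the example table.
expectancy = {"A": 51, "B": 6, "C": -67}
count = {"A": 28, "B": 15, "C": 7}

current_total = sum(expectancy[g] * count[g] for g in count)

# Converting one C-grade trade to an A-grade trade swaps -$67 for +$51.
gain_per_conversion = expectancy["A"] - expectancy["C"]
improvement = gain_per_conversion * count["C"]
new_total = current_total + improvement

print(current_total, improvement, new_total)  # 1049 826 1875
print(f"{improvement / current_total:.1%}")   # 78.7%
```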

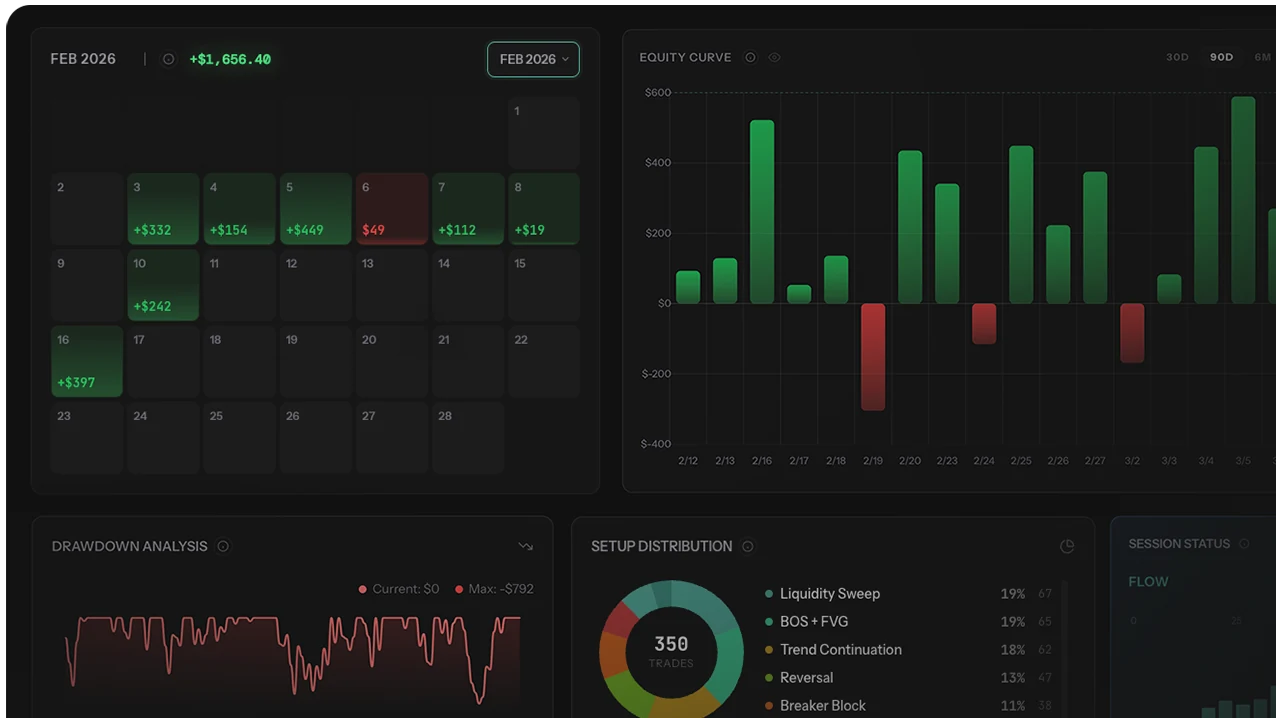

Quality grading infrastructure is a precondition for this kind of analysis. Manual grading in spreadsheets works for simple cases but breaks down once you want to filter by grade, setup, and session in combination. Automated journals with built-in grade fields enforce same-session entry, lock grades after submission, and produce the grade-level decomposition automatically. The trading journal comparison covers which journals support quality grading natively. The paired trade quality vs P/L analysis covers how the grading data feeds equity curve filtering, and the impact analysis covers the quantitative tool for cutting C-grade trades systematically.

Four Common Patterns in Quality Data

After reviewing quality data from active retail traders, four patterns recur consistently:

Pattern 1: C-Grade Trades Cluster Around Losses

Most C-grade trades follow a losing trade. The loss triggers frustration, which triggers an impulsive entry. The same emotional state persists into subsequent trades, producing chains of C-grade entries. The fix: a cooling period between trades — even 5 minutes of non-trading activity breaks the cycle. Hard rule: no new trade within 5 minutes of a loss exceeding 1.5% of account.
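The hard rule above is simple enough to enforce mechanically. A minimal sketch, assuming you record the exit time and P/L of the previous trade; `can_enter` and the two constants are hypothetical names.

```python
from datetime import datetime, timedelta
from typing import Optional

COOL_OFF = timedelta(minutes=5)
LOSS_THRESHOLD = 0.015  # 1.5% of account


def can_enter(now: datetime, last_exit_time: Optional[datetime],
              last_pnl: float, account_balance: float) -> bool:
    """Return False while the cooling period after a large loss is still running."""
    if last_exit_time is None:
        return True  # no previous trade this session
    big_loss = last_pnl < 0 and abs(last_pnl) > LOSS_THRESHOLD * account_balance
    return not (big_loss and now - last_exit_time < COOL_OFF)
```

For example, on a $10,000 account a $200 loss blocks new entries for five minutes, while a $100 loss does not.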

Pattern 2: B-Grade Trades Happen at Predictable Times

Many traders take B-grade trades during the first and last 30 minutes of their session. Early trades are often premature (entering before confirmation completes). Late trades are often forced (needing to find a trade before session ends). Adjusting session windows by 30 minutes — start 30 min later, stop 30 min earlier — frequently converts 50-70% of B-grades to A-grades.

Pattern 3: A-Grade Win Rate Is 10-20 Percentage Points Higher Than Overall Win Rate

When a trader follows their rules perfectly, the strategy performs as designed. The gap between A-grade win rate and overall win rate represents the cost of imperfect execution. A trader with 50% overall win rate but 60% A-grade win rate is losing 10 percentage points of expected performance to discipline failures. The strategy isn't broken; the execution is.
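The gap described here can be measured from graded trades. This is a sketch under the assumption that trades are `(grade, pnl)` tuples and that a win means positive P/L; `execution_gap` is an illustrative name.

```python
def execution_gap(trades):
    """Return (overall_win_rate, a_grade_win_rate, gap_in_percentage_points)."""
    trades = list(trades)
    a_pnls = [pnl for grade, pnl in trades if grade == "A"]
    if not trades or not a_pnls:
        raise ValueError("need at least one trade and one A-grade trade")
    overall = sum(pnl > 0 for _, pnl in trades) / len(trades)
    a_rate = sum(pnl > 0 for pnl in a_pnls) / len(a_pnls)
    return overall, a_rate, (a_rate - overall) * 100
```

A trader with a 50% overall win rate and a 60% A-grade win rate gets a gap of about 10 points, matching the example above.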

Pattern 4: A-Grade Frequency Predicts Forward Performance

Traders with stable 50%+ A-grade frequency typically maintain or improve performance over the next 90 days. Traders with declining A-grade frequency over consecutive months typically see performance deterioration follow within 30-60 days. A-grade percentage is a leading indicator of forward P/L; tracking the trend is more diagnostic than tracking current P/L because P/L lags execution quality by roughly one to two months.

Your real strategy performance is your A-grade performance. Everything else is noise added by imperfect execution. If your A-grade trades are profitable but overall results are break-even, the problem isn't your strategy — it's your discipline. The fix is execution quality, not strategy redesign.

How to Increase Your A-Grade Percentage

The goal is to shift trade distribution toward A-grades over time. Realistic target: 60%+ A-grade trades within 3 months of consistent grading.

Step 1: Identify Your C-Grade Triggers

Review all C-grade trades from the last month. What caused each one? Common triggers: revenge after a loss, boredom during slow markets, FOMO on a fast move, trading outside planned hours. Name the triggers explicitly. The grade reasons (Rule 4 above) make this analysis trivial — read through the C-grade notes and the recurring triggers reveal themselves.

Step 2: Create a Pre-Trade Checklist

Before every trade, verify: Does this meet my entry criteria? Is the position size correct? Is my stop loss placed? Am I within my planned session? Am I in an emotionally stable state (no recent loss-driven frustration)? If any answer is no, don't take the trade. A 15-second checklist eliminates most C-grade entries before they happen.
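The checklist can be encoded so that a single "no" blocks the trade. A minimal sketch: the item wording paraphrases the five questions above, and `failed_checks` and `cleared_to_trade` are hypothetical helper names.

```python
CHECKLIST = (
    "Meets my entry criteria",
    "Position size is correct",
    "Stop loss is placed",
    "Within my planned session",
    "Emotionally stable (no recent loss-driven frustration)",
)


def failed_checks(answers):
    """answers: dict mapping checklist items to True/False. Missing items count as failed."""
    return [item for item in CHECKLIST if not answers.get(item, False)]


def cleared_to_trade(answers):
    """True only when every checklist item was answered yes."""
    return not failed_checks(answers)
```

Treating unanswered items as failures is a deliberate choice in this sketch: skipping a question should never be easier than answering it.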

Step 3: Review Grades Weekly

Every Sunday, look at the week's grades. What percentage were A? Were there C-grades? What triggered them? Is the A-grade percentage improving week over week? Track the trend, not just the current week. The improvement signal is in the trajectory across 4-8 weeks.
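The weekly trend can be computed instead of eyeballed. The sketch below assumes trades are `(date, grade)` tuples and groups them by ISO week; the slope treats the listed weeks as evenly spaced, so weeks with no trades are simply skipped. Both function names are illustrative.

```python
from collections import defaultdict
from datetime import date


def weekly_a_rate(trades):
    """trades: iterable of (trade_date, grade). Returns [(iso_year, iso_week, a_share), ...]."""
    buckets = defaultdict(list)
    for d, grade in trades:
        iso = d.isocalendar()
        buckets[(iso[0], iso[1])].append(grade)
    return [
        (year, week, grades.count("A") / len(grades))
        for (year, week), grades in sorted(buckets.items())
    ]


def trend_per_week(rates):
    """Least-squares slope of A-grade share per week; positive means improving."""
    n = len(rates)
    if n < 2:
        return 0.0
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(rates) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, rates))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den


log = [(date(2024, 1, 1), "A"), (date(2024, 1, 2), "C"),
       (date(2024, 1, 8), "A"), (date(2024, 1, 9), "A")]
weekly = weekly_a_rate(log)
slope = trend_per_week([share for _, _, share in weekly])
```

With only a handful of trades per week the weekly share is noisy, which is why the slope across 4-8 weeks is more informative than any single week.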

Step 4: Focus on Process Streaks

Instead of tracking winning/losing streaks, track consecutive A-grade trades. Try to set a personal record for consecutive A-grade trades. This shifts focus from outcomes (which you can't control) to process (which you can). Process streaks are achievable; outcome streaks are luck-dependent.

Process Streak Challenge: Set a goal of 10 consecutive A-grade trades. It doesn't matter whether they win or lose, only that each one followed your rules perfectly. When you achieve 10, go for 15. This reframes the daily goal from "make money" (which depends on luck) to "execute well" (which depends on discipline).
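Tracking the streak takes a few lines once grades are logged in order. A minimal sketch, assuming `grades` is a chronological sequence of "A", "B", or "C"; `a_grade_streaks` is an illustrative name.

```python
def a_grade_streaks(grades):
    """Return (current_streak, longest_streak) of consecutive 'A' grades."""
    current = longest = 0
    for g in grades:
        current = current + 1 if g == "A" else 0  # any B or C resets the streak
        longest = max(longest, current)
    return current, longest
```

Whether a B-grade should reset the streak is a judgment call; this sketch resets on anything other than A, in line with the "followed your rules perfectly" wording of the challenge.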

3 Mistakes Traders Make With Quality Grading

Mistake 1: Grading After Seeing Outcome

The most common error. Grading hours after the trade closes — or worse, the next day — allows outcome bias to contaminate the grade. Winners get rationalized into A-grades; losers get downgraded to B or C even when the process was actually fine. The framework only works with same-session grading, ideally within 5 minutes of close. If you can't grade immediately, the grade isn't trustworthy and shouldn't be added to the analytical dataset.

Mistake 2: Inflating Grades to Feel Good

Self-serving grading defeats the framework's entire purpose. A B-grade called A produces no diagnostic information; the trader continues thinking they have better discipline than they do. Honest grading is uncomfortable — most traders running rigorous grading discover their A-grade rate is 35-50% rather than the 70%+ they assumed. The discomfort is the data point. Inflated comfort produces no improvement.

Mistake 3: Optimizing Grade Distribution Without Checking Per-Grade Expectancy

Some traders chase A-grade percentage as the primary metric, but the goal is positive expectancy on A-grades. If A-grade trades show negative expectancy in your data, the grading criteria don't match a profitable strategy — adjusting criteria is required, not just shifting distribution. Always check: A-grade expectancy > 0, B-grade expectancy ≥ 0, C-grade expectancy < 0. If A-grade expectancy isn't materially positive, the framework is mis-calibrated and grading more A-grades won't fix performance.
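The three-part check can be run automatically whenever the per-grade numbers are refreshed. A sketch only: `calibration_issues` is a hypothetical name, and the input is assumed to be per-trade expectancy keyed by grade.

```python
def calibration_issues(expectancy):
    """expectancy: dict such as {'A': 51.0, 'B': 6.0, 'C': -67.0}. Returns failed checks."""
    issues = []
    if not expectancy["A"] > 0:
        issues.append("A-grade expectancy is not positive")
    if not expectancy["B"] >= 0:
        issues.append("B-grade expectancy is negative")
    if not expectancy["C"] < 0:
        issues.append("C-grade expectancy is not negative")
    return issues
```

An empty list means the grading criteria line up with profitability as expected; a failure on the first check is the signal to revisit the criteria rather than chase a higher A-grade percentage.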

Who Should Skip Quality Grading (For Now)

- Traders without a written trading plan. Grading requires explicit criteria to grade against. Without a written plan defining entry conditions, position sizing, and stop placement, grades become subjective opinions rather than measurements. Build the plan first; grade against it second.

- Traders averaging fewer than 20 trades per month. Grading infrastructure is overhead that produces value at higher trade frequency. At low frequency, post-trade review of each individual trade with full chart analysis is more diagnostic than abstract A/B/C grading.

- Algorithmic traders. Systematic strategies don't have discretionary execution to grade; they have deterministic rules that either fired or didn't. The algorithmic equivalent is rule-firing rate (% of signals taken correctly), not subjective quality grading.

- Traders mid-strategy-transition. If you've changed entry rules in the last 30 days, the grading criteria don't yet have a stable definition. Stabilize the strategy first; grade against the stable version, not the moving target.

- Traders unwilling to grade C honestly. The framework requires honest acknowledgment of rule breaks. Traders who can't bring themselves to mark trades as C-grades despite clear rule violations should skip the framework entirely — inflated grading produces no value and may be worse than no grading because it creates false confidence.

Integrating Quality Scores Into Your Journal

Quality scoring works best when built into your trade-logging workflow. For every trade, log:

- Standard trade data: instrument, direction, entry, exit, stop loss, position size, P/L

- Quality grade: A, B, or C

- Grade reason: One sentence explaining the grade

- Screenshot: Chart at time of entry with your annotations

Over time, your journal becomes a performance database. You can filter by grade and see exactly what your trading looks like at different quality levels. See the journal examples guide for real-world layouts and the journal field structure guide for the data inputs required.
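One way to picture the journal as a database is a typed record per trade plus a filter. The field names below mirror the list above but are otherwise hypothetical, as are `JournalEntry` and `filter_by_grade`; a real journal tool will have its own schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class JournalEntry:
    instrument: str
    direction: str            # "long" or "short"
    entry: float
    exit: float
    stop_loss: float
    position_size: float
    pnl: float
    grade: str                # "A", "B", or "C"
    grade_reason: str         # one sentence explaining the grade
    screenshot_path: Optional[str] = None

    def __post_init__(self):
        # Reject entries that would pollute grade-level analysis.
        if self.grade not in ("A", "B", "C"):
            raise ValueError(f"grade must be A, B, or C, got {self.grade!r}")
        if not self.grade_reason.strip():
            raise ValueError("grade_reason must not be empty")


def filter_by_grade(entries, grade):
    """Return only the entries with the given grade."""
    return [e for e in entries if e.grade == grade]
```

Validating the grade and its reason at logging time keeps ungraded or unexplained trades out of the dataset, which is what makes later filtering trustworthy.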

The Review Priority Inversion

When reviewing, always look at grade distribution first and P/L second. A month with 70% A-grades and a small loss is better than a month with 30% A-grades and a small profit. The first was good trading with bad luck (sustainable, repeatable); the second was bad trading with good luck (unsustainable, unrepeatable). The first is on a path to long-term profitability; the second is on a path to account blow-up despite the favorable current month.

Methodology Note

- Grading framework: Standard practice in process-quality measurement adapted from manufacturing process capability analysis to discretionary trading.

- Process-vs-outcome distinction: Draws from decision-theory literature on outcome bias in judgment under uncertainty. The framework specifically isolates the decision-quality dimension that outcome-based evaluation conflates with luck.

- Sample size requirement: 50+ graded trades for moderate-confidence per-grade analysis. Grade distribution stabilizes after 100+ trades; early grade analysis can be skewed by recency bias and grading practice variation.

- Grading rigor as precondition: The framework's diagnostic value depends entirely on grading honesty. Inflated grading (typically 70%+ A-grades) produces no actionable data. Realistic disciplined-trader distributions: 40-60% A, 25-40% B, 5-15% C.

- Forward applicability: A-grade percentage trend is a leading indicator of forward P/L (~30-60 day lead time). Tracking trend matters more than current snapshot for predictive use.

For our full editorial process, see our editorial methodology.

Final Verdict: Grade the Process, Track the Outcome

P/L is what happened. Quality is why. The two metrics measure different things, and only the combination produces an honest diagnostic. A trader judging only by P/L can't distinguish luck from skill at small sample sizes, can't identify which executions to scale and which to fix, and can't separate strategy issues from discipline issues. Quality grading creates the missing measurement layer that makes those distinctions possible.

The single biggest mistake in quality grading is inflation — softening grades to feel comfortable, contaminating the dataset that's specifically designed to surface uncomfortable truths. Honest grading is genuinely uncomfortable; most disciplined traders running rigorous grading discover their A-grade rate is 35-50% rather than the 70%+ they assumed. The discomfort is the data; protect it from your own self-serving distortion or the framework produces nothing.

Three principles from the framework:

- An A-grade loss beats a C-grade win. Process beats outcome at any sample size beyond 50-100 trades. Optimize the process; the outcomes follow.

- Grade immediately or don't grade. Hours-later grading allows outcome bias to contaminate the measurement. Same-session grading is the precondition for honest data.

- A-grade percentage trend leads forward P/L. Track the trajectory across 4-8 weeks; declining A-grade frequency predicts performance deterioration 30-60 days ahead. Act on the leading indicator, don't wait for P/L confirmation.

For related analysis: trade quality vs P/L analysis for the equity curve filtering that quality grades enable, impact analysis for quantitatively cutting C-grade categories, edge measurement framework for the underlying expectancy math, trade review guide for the weekly process that builds grading habits, risk management framework for the position-sizing rules that determine grade criteria, and journal field structure guide for integrating grading into your data capture workflow.